Storage vendors orbit the Nvidia sun at GTC

Hitachi Vantara and Nutanix announced support for Nvidia’s new GPUs and software at GTC 2026, much like every other storage system vendor, while IBM integrated watsonx and other offerings more closely with Nvidia. Seagate demonstrated a two-tier hybrid external KV Cache composed of SSDs and disk drives, as it did last year.

Think of all the storage companies as planets orbiting the vast Nvidia Sun, with each one trying to get as close to Nvidia as possible to gain an advantage when selling its wares to customers.

Hitachi Vantara

Hitachi Vantara's Hitachi iQ is a hardware and software portfolio designed to help enterprises deploy and operate AI infrastructure, and is built on the Virtual Storage Platform One (VSP One) storage system. It integrates accelerated computing, networking, and storage into a validated infrastructure stack, and supports Hitachi's HMAX suite of software that brings AI to social infrastructure. Hitachi iQ now supports:

- Nvidia Blackwell GPUs (air-cooled)

- Blackwell Ultra GPUs (air-cooled and liquid-cooled)

- Nvidia MGX-based system with up to four RTX PRO 6000 Blackwell Server Edition GPUs

- The newly announced RTX PRO 4500 Blackwell Server Edition GPU (support planned)

Hitachi Vantara will support Nvidia's STX reference architecture to develop AI-native storage systems running on Vera Rubin GPUs, BlueField-4 DPUs, Spectrum-X networking, and Nvidia's AI software.

The Hitachi iQ Studio software is built on Nvidia's AI Data Platform reference design and includes expanded AI blueprints and multi-agent coordination capabilities. The new blueprints introduce defined agent roles, including supervisor and worker models. Worker agents execute tasks while supervisor agents coordinate multi-agent workflows and adapt based on outcomes.
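The supervisor and worker roles described above can be illustrated with a minimal Python sketch. This is not Hitachi iQ Studio code or any Nvidia blueprint API; the `Worker` and `Supervisor` classes, the worker names, and the fallback rule are all hypothetical, chosen only to show a supervisor dispatching tasks and adapting when a worker fails.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Worker:
    """A worker agent: attempts a task and reports success or failure."""
    name: str
    run: Callable[[str], bool]


@dataclass
class Supervisor:
    """A supervisor agent: routes tasks to workers and reacts to outcomes."""
    workers: list
    log: list = field(default_factory=list)

    def dispatch(self, task: str) -> Optional[str]:
        # Try each worker in order; if one fails, fall back to the next.
        for worker in self.workers:
            ok = worker.run(task)
            self.log.append((task, worker.name, ok))
            if ok:
                return worker.name
        return None  # no worker could complete the task


# Hypothetical workers: one only copes with short tasks, one handles anything.
fast = Worker("fast", lambda task: len(task) < 10)
thorough = Worker("thorough", lambda task: True)

supervisor = Supervisor([fast, thorough])
handled_by = [supervisor.dispatch(t) for t in ["summarize", "reconcile ledgers"]]
print(handled_by)
```

The log kept by the supervisor records every attempt, including the failed one, which is the kind of outcome history a real coordinator could use to adjust its routing.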

Hitachi iQ Studio also expands support for Nemotron models, large language models designed to power advanced, tool-using agentic AI systems, and introduces time machine capabilities that enable AI systems to navigate historical datasets. This time-aware intelligence strengthens explainability and supports customers that rely on long-term data patterns to inform decisions.

There is now tighter integration between Hitachi iQ Studio and Hammerspace to streamline data access for agent-driven workflows. With this expanded capability, data managed by Hammerspace can be accessed directly within Hitachi iQ Studio using Model Context Protocol (MCP), an open standard that allows AI systems to securely connect to external data sources.
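For readers unfamiliar with MCP, the protocol exchanges JSON-RPC 2.0 messages, and a client asks a server to run a tool with a `tools/call` request. The sketch below only builds such a message; the tool name `search_files` and its arguments are hypothetical and are not a documented Hammerspace or Hitachi iQ Studio tool.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call(1, "search_files", {"query": "quarterly report"})
decoded = json.loads(request)
print(decoded["method"], decoded["params"]["name"])
```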


IBM

IBM gave us four points about its work with Nvidia:

- Accelerating structured data analytics. An open-source integration of Nvidia cuDF and Presto, the SQL engine in IBM’s watsonx.data, will enable faster query execution on large datasets. To validate this, IBM and Nvidia applied GPU-accelerated watsonx.data to Nestlé’s Order-to-Cash data mart. This cut query runtime from 15 minutes to 3 minutes, achieving 83 percent cost savings and an overall 30X improvement in price-performance.

- Unlocking the full value of enterprise data. Companies are addressing their unstructured data problem with Docling from IBM and Nvidia Nemotron open models – a combination designed to offer intelligent document extraction at enterprise scale.

- Optimizing infrastructure. Nvidia is using 10 PB of Storage Scale System 6000 for its GPU-native advanced analytics engines. For regulated industries where digital sovereignty is a factor, IBM and Nvidia are exploring the integration of IBM Sovereign Core with Nvidia infrastructure and Nemotron models.

- Advancing the enterprise AI stack. IBM is offering Blackwell Ultra GPUs on IBM Cloud for large-scale training, high-throughput inferencing, and AI reasoning. This tech will also be integrated across Red Hat AI Factory with Nvidia and VPC servers. Additionally, IBM Consulting plans to bring Red Hat AI Factory with Nvidia to clients through IBM Consulting Advantage.
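The Nestlé figures in the first point can be cross-checked with some simple arithmetic. IBM does not say how it derived the 30X number, so the sketch below is one plausible reading that assumes price-performance is the speedup divided by the relative cost; the variable names are ours.

```python
runtime_before_min = 15.0  # quoted query runtime before GPU acceleration
runtime_after_min = 3.0    # quoted query runtime after GPU acceleration
cost_savings = 0.83        # quoted 83 percent cost savings

speedup = runtime_before_min / runtime_after_min
runtime_reduction = 1 - runtime_after_min / runtime_before_min
relative_cost = 1 - cost_savings  # fraction of the original cost still paid

# Assumption: price-performance gain = speedup / relative cost.
price_performance_gain = speedup / relative_cost

print(f"speedup: {speedup:.1f}x")
print(f"runtime reduction: {runtime_reduction:.0%}")
print(f"price-performance gain: {price_performance_gain:.1f}x")
```

Under that assumption a 5x speedup combined with paying 17 percent of the original cost works out to roughly 29.4x, which is consistent with the rounded 30X claim.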

Nutanix

Nutanix announced Nutanix Agentic AI, a full-stack software solution built to help customers accelerate adoption of Agentic AI.

Thomas Cornely, EVP of Product Management at Nutanix, said in a statement: "Nutanix Agentic AI extends our AHV hypervisor, Flow Virtual Networking, Nutanix Kubernetes Platform, and Nutanix Enterprise AI to deliver a cloud operating model to enterprise AI factories, enabling infrastructure and platform teams to simply build, operate, and govern AI factories while providing Agentic AI developers with the performance and rich set of models and AI platform services they need."

Nutanix Agentic AI integrates with Nvidia AI Enterprise at the Agent Builder layer and orchestrates the Nvidia-certified ecosystem of AI factories for supported configurations. It enables customers to build, run, and protect agentic AI applications with a suite of infrastructure orchestration and security software coupled with AI platform services (PaaS) and models-as-a-service (MaaS) for data scientists and agentic AI developers.

In more detail:

- Nutanix Enterprise AI (NAI) v2.6 includes an AI Gateway service for unified policy control over cloud-hosted and private LLMs. New support for a Model Context Protocol (MCP) server and for fine-tuning extends its existing MaaS capabilities to enable agents to securely connect to enterprise tools and data sources. NAI also supports the Nemotron family of open-source AI models, datasets, and training tools.

- Nutanix has extended its CNCF-compliant Nutanix Kubernetes Platform (NKP) with a set of pre-built open-source AI developer tools, including notebooks, vector databases, MLOps workflow engines and agentic frameworks. Due to its Nvidia AI Enterprise integration, developers can instantly deploy Nvidia NIMs, including Nemotron.

- The AHV hypervisor has been enhanced to automatically optimize the allocation of physical resources to virtual machines on GPU-dense servers and help maximize performance. The Flow Virtual Networking software has been enhanced to offload the network data plane to BlueField DPUs, reducing host CPU and memory consumption.

- Nutanix Unified Storage delivers linearly scalable read/write performance for thousands of GPU clients. Nutanix provides a scalable, low-latency data fabric that maximizes GPU efficiency by providing a high-capacity tier for KV Cache offloading and support for S3 over RDMA and NFS over RDMA.

Nutanix Agentic AI software comprises products that are either already generally available or currently in early access and expected to be available soon. More information about the solution can be seen here.

Seagate

Seagate demonstrated its disk JBOD (Just a Bunch of Disks) backing up a Supermicro KV Cache-extending SSD JBOF (Just a Bunch of Flash). It also mentioned its NVMe-accessed disk drive idea, but did not put it front and center as it had at GTC 2025.

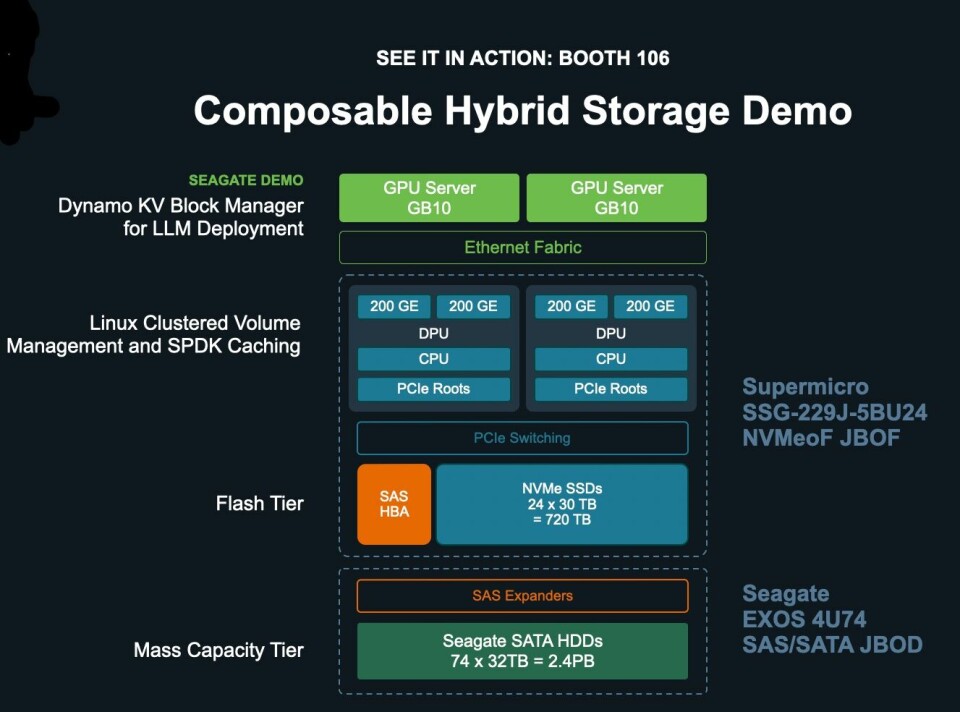

The KV Cache extension demo featured:

- Nvidia GB10 GPU cluster compute node, with Dynamo KV Block Manager for LLM deployment.

- Two BlueField-4 (BF-4) DPUs controlling NVMe-oF and caching with Linux Clustered Volume Management and SPDK Caching, hooked up to the GPU servers over Ethernet.

- Supermicro NVMe-oF JBOF as a high-speed networked NVMe SSD cache tier to keep immediate context close to compute, linked to the BF-4s over PCIe. 720 TB capacity from 24 x 32 TB NVMe SSDs.

- SAS-connected Seagate Exos 4U74 hard drive JBOD providing 2.4 PB from 74 x 32 TB SATA Exos drives, completing a hybrid SSD/HDD NVMe-oF target.
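The capacity figures in the list above are easy to sanity-check. The sketch below multiplies the quoted drive counts by the quoted drive sizes, assuming decimal units (1 PB = 1,000 TB). The disk tier comes to about 2.4 PB as stated. The flash tier comes to 768 TB raw, which is higher than the quoted 720 TB; the demo material does not explain the difference.

```python
def raw_capacity_tb(drive_count: int, drive_tb: float) -> float:
    """Raw capacity of a shelf in TB, ignoring formatting and RAID overhead."""
    return drive_count * drive_tb


flash_tb = raw_capacity_tb(24, 32)  # Supermicro JBOF: 24 x 32 TB NVMe SSDs
disk_tb = raw_capacity_tb(74, 32)   # Seagate Exos 4U74: 74 x 32 TB drives
disk_pb = disk_tb / 1000            # assumes decimal petabytes
disk_to_flash_ratio = disk_tb / flash_tb

print(f"flash tier: {flash_tb:.0f} TB raw")
print(f"disk tier: {disk_tb:.0f} TB raw ({disk_pb:.1f} PB)")
print(f"disk-to-flash capacity ratio: {disk_to_flash_ratio:.2f}")
```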

The multi-titled Vik Malyala, President and Managing Director, EMEA, and SVP, Technology and AI, at Supermicro, said: “Combining Supermicro’s JBOF flash tier and Seagate’s hard drive tier can dramatically reduce inference costs while providing high performance.”

How so?

Seagate and Supermicro say a smart AI stack separates short-term memory (flash) from long-term memory (disk) and uses each tier for what it does best:

- Real-time access tiers (GPU HBM memory, CPU DRAM, local and network NVMe SSDs): handle the “right now” context — active tokens, hot embeddings and frequently accessed data

- Capacity tiers (built from hard drives): hold “long horizon” context — large datasets, long-lived histories and extended agent memory

Data placement is automated across all tiers, with DPUs coordinating data movement and caching between them. The pitch is that you need less of the expensive flash this way, as “Hard drives deliver dramatically lower cost per GB for long-term memory.”
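The tiering idea can be sketched as a small two-tier store in which a limited flash tier sits in front of a large disk tier. This is a toy illustration, not Seagate's, Supermicro's, or Nvidia Dynamo's implementation; the `TieredKVStore` class and its least-recently-used demotion policy are our assumptions.

```python
from collections import OrderedDict
from typing import Optional


class TieredKVStore:
    """Toy two-tier store: a small LRU 'flash' tier in front of a 'disk' tier."""

    def __init__(self, flash_slots: int) -> None:
        self.flash_slots = flash_slots
        self.flash = OrderedDict()  # hot tier, ordered from coldest to hottest
        self.disk = {}              # capacity tier

    def put(self, key: str, value: bytes) -> None:
        self.disk.pop(key, None)  # avoid leaving a stale copy on disk
        self.flash[key] = value
        self.flash.move_to_end(key)  # mark as most recently used
        self._demote_if_full()

    def get(self, key: str) -> Optional[bytes]:
        if key in self.flash:
            self.flash.move_to_end(key)
            return self.flash[key]
        if key in self.disk:
            value = self.disk.pop(key)
            self.put(key, value)  # promote back into the hot tier
            return value
        return None

    def _demote_if_full(self) -> None:
        # Move the least recently used entries down to the capacity tier.
        while len(self.flash) > self.flash_slots:
            cold_key, cold_value = self.flash.popitem(last=False)
            self.disk[cold_key] = cold_value


store = TieredKVStore(flash_slots=2)
for name in ["a", "b", "c"]:
    store.put(name, name.encode())  # "a" is demoted when "c" arrives
value = store.get("a")              # promotes "a", demotes "b"
print(sorted(store.flash), sorted(store.disk))
```

In the demo, the DPUs play the role of the promotion and demotion logic here, moving KV Cache blocks between the SSD and hard drive tiers.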

A chart of test results shows virtually no difference between the hybrid SSD/HDD cache and a local NVMe SSD in terms of time to first token:

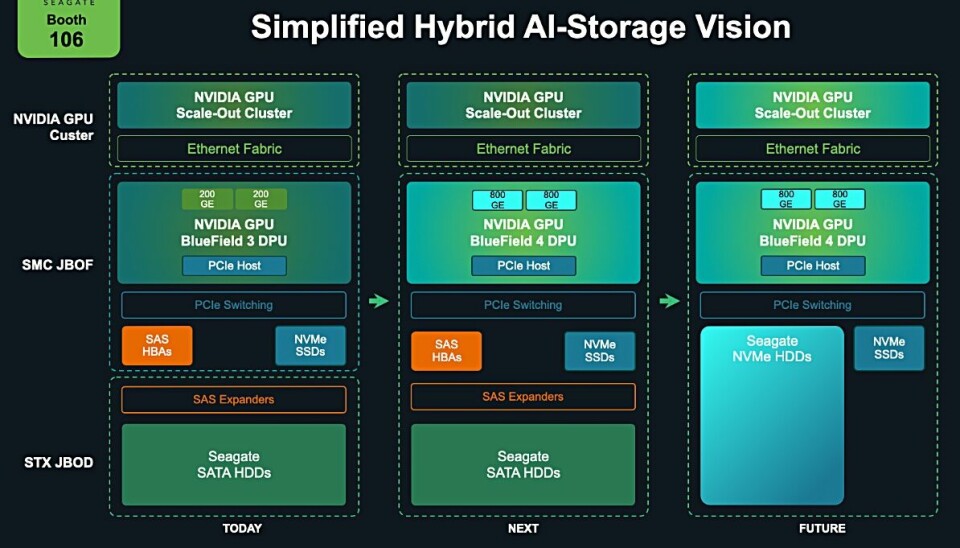

Seagate says its system scales out and can accommodate updated components.

Its slide shows a move from BlueField-3 today to BlueField-4 at some point ahead, and then from SATA to NVMe-connected disk drives in the future. This contrasts with a similar demo at GTC 2025, in which Seagate used prototype NVMe disk drives.

NVMe hard drives are simply not needed yet, even though Seagate pointed out at the time that they remove the need for HBAs, protocol bridges, and additional SAS infrastructure, and provide a unified NVMe architecture that streamlines AI storage.

This is a demo and there is no actual Seagate/Supermicro combined JBOF/JBOD/DPU product. If KV Cache extension becomes widespread, and if NAND prices keep rising and the supply shortage persists, then Seagate may have a way to sell HDDs into the KV Cache extension market. Full marks for trying.